Overview

This thesis explored how to improve the behavior of the Grey Wolf Optimizer (GWO) in a discrete scheduling setting.

The project focused on the Job Shop Scheduling Problem (JSSP), where the operations of multiple jobs must be sequenced on machines under precedence and capacity constraints.

The objective was to reduce makespan while balancing exploration and exploitation more effectively than a baseline discrete GWO implementation.

Problem framing

JSSP is a classic constrained optimization problem in which a small change to a candidate solution can have large consequences for the resulting schedule.

- Jobs contain ordered operations that must respect precedence.

- Each machine can process only one operation at a time.

- The main metric is makespan: the total time needed to finish all jobs.
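The constraints and the metric above can be sketched in a few lines. This is a minimal illustration, assuming an operation-based encoding (each job index appears once per operation of that job); the thesis does not state its encoding here, and the names `Job` and `makespan` are hypothetical.

```python
from typing import List, Tuple

# A job is an ordered list of (machine, duration) operations.
Job = List[Tuple[int, int]]


def makespan(jobs: List[Job], sequence: List[int]) -> int:
    """Decode an operation-based sequence and return the makespan.

    The k-th occurrence of job j in `sequence` means "schedule the
    k-th operation of job j next" (an assumed encoding).
    """
    n_machines = 1 + max(m for job in jobs for m, _ in job)
    next_op = [0] * len(jobs)          # index of each job's next operation
    job_ready = [0] * len(jobs)        # time each job's previous operation ends
    machine_ready = [0] * n_machines   # time each machine becomes free

    for j in sequence:
        machine, duration = jobs[j][next_op[j]]
        # Precedence: wait for the job. Capacity: wait for the machine.
        start = max(job_ready[j], machine_ready[machine])
        finish = start + duration
        job_ready[j] = finish
        machine_ready[machine] = finish
        next_op[j] += 1

    return max(job_ready)


# Toy instance with two jobs and two machines (made-up data).
toy_jobs = [[(0, 3), (1, 2)], [(1, 2), (0, 4)]]
print(makespan(toy_jobs, [0, 1, 0, 1]))  # interleaved order
print(makespan(toy_jobs, [0, 0, 1, 1]))  # job 0 first, then job 1
```

On this toy instance the two orderings give different makespans, which is the sensitivity described above: moving two entries in the sequence changes when every later operation can start.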

Approach

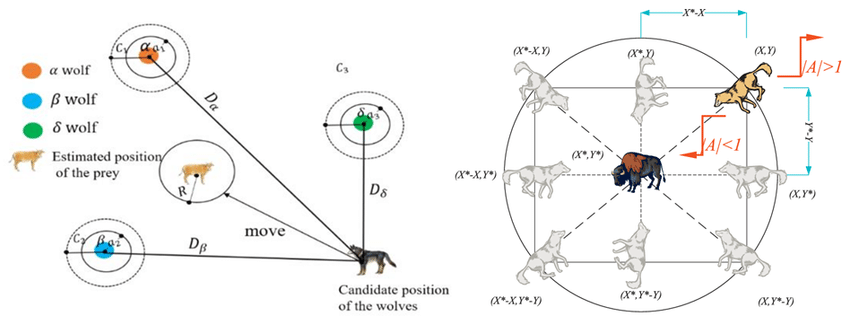

The work starts from a hybrid GWO adapted to the discrete JSSP space and then introduces additional search controls.

- Use crossover-like operators to translate alpha, beta and delta guidance into discrete candidate schedules.

- Apply Variable Neighborhood Search as a local-improvement stage.

- Use adaptive mutation and initialization rules to keep the search from collapsing too early.
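One way to picture the crossover-based guidance is a POX-style operator applied between a wolf and one of the three leaders. The sketch below is an assumption-laden illustration, not the thesis implementation: the operation-based encoding, the uniform choice of leader, the 50% job-subset probability, and the names `pox` and `move_towards_leaders` are all hypothetical.

```python
import random
from typing import List, Set


def pox(parent_a: List[int], parent_b: List[int], keep_jobs: Set[int]) -> List[int]:
    """POX-style crossover on operation-based sequences.

    Operations of jobs in `keep_jobs` stay at their positions from parent_a;
    the remaining slots are filled with the other jobs' operations in the
    order they appear in parent_b.
    """
    child = [j if j in keep_jobs else None for j in parent_a]
    filler = iter(j for j in parent_b if j not in keep_jobs)
    return [j if j is not None else next(filler) for j in child]


def move_towards_leaders(wolf: List[int], alpha: List[int], beta: List[int],
                         delta: List[int], n_jobs: int,
                         rng: random.Random) -> List[int]:
    """Pull a wolf towards a randomly chosen leader (assumed rule)."""
    leader = rng.choice([alpha, beta, delta])
    keep = {j for j in range(n_jobs) if rng.random() < 0.5}
    # The leader contributes the kept jobs, the wolf contributes the rest.
    return pox(leader, wolf, keep)


child = pox([0, 1, 2, 0, 1, 2], [2, 2, 1, 1, 0, 0], {0})
print(child)
```

Because the child keeps the same number of occurrences of every job, it always decodes to a feasible schedule under this encoding, so no repair step is needed.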

What I added

- Per-machine swap mutation to refine local sequences while respecting structure.

- Duplicate control to detect and replace near-clone individuals and preserve diversity.

- A modified VNS acceptance rule that allows equal-quality moves when they shift the population into new regions of the search space.
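Two of these additions can be sketched as small helpers. The code below is illustrative only and rests on assumptions not stated in the text: "new region" is approximated as "a sequence not seen before", near-clones are detected by position-wise similarity against a threshold, and the population is assumed to be sorted best first. The names `accept_move`, `similarity` and `replace_near_clones` are hypothetical.

```python
from typing import Callable, List, Sequence, Set, Tuple


def accept_move(current_cost: int, candidate_cost: int,
                candidate: Sequence[int], seen: Set[Tuple[int, ...]]) -> bool:
    """Accept strict improvements, plus equal-cost moves to unseen sequences."""
    if candidate_cost < current_cost:
        return True
    return candidate_cost == current_cost and tuple(candidate) not in seen


def similarity(a: Sequence[int], b: Sequence[int]) -> float:
    """Fraction of positions at which two equal-length sequences agree."""
    return sum(x == y for x, y in zip(a, b)) / len(a)


def replace_near_clones(population: List[List[int]], threshold: float,
                        make_replacement: Callable[[], List[int]]) -> List[List[int]]:
    """Replace any individual too similar to one already kept.

    Assumes `population` is sorted best first, so the best copy survives.
    """
    kept: List[List[int]] = []
    for individual in population:
        if any(similarity(individual, other) >= threshold for other in kept):
            kept.append(make_replacement())
        else:
            kept.append(individual)
    return kept


population = [[0, 1, 0, 1], [0, 1, 0, 1], [1, 0, 1, 0]]
print(replace_near_clones(population, 0.9, lambda: [1, 1, 0, 0]))
```

The equal-cost branch is what lets the local search drift across plateaus of equal makespan, while the `seen` check keeps it from cycling between sequences it has already visited.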

Key results

- The final setup achieved a lower mean relative percentage deviation (RPD) from best-known makespans than the baseline.

- More instances reached best-known solutions on the Lawrence and Fisher-Thompson sets.

- POX plus best-child selection remained slightly stronger in final quality, even though PPX required fewer fitness evaluations.
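For readers unfamiliar with the metric, the snippet below shows how mean RPD is usually computed, assuming the common definition as the relative percentage deviation of an obtained makespan from the best-known makespan. The function names and the numbers are made up for illustration and are not results from the thesis.

```python
from typing import List, Tuple


def rpd(makespan: float, best_known: float) -> float:
    """Relative percentage deviation from the best-known makespan."""
    return 100.0 * (makespan - best_known) / best_known


def mean_rpd(results: List[Tuple[float, float]]) -> float:
    """Average RPD over (obtained makespan, best-known makespan) pairs."""
    return sum(rpd(found, best) for found, best in results) / len(results)


# Hypothetical values: one instance 10% above best-known, one matching it.
print(mean_rpd([(110, 100), (100, 100)]))
```

An RPD of zero means the run matched the best-known solution for that instance, so a lower mean RPD and a higher count of instances at zero are two views of the same improvement.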

What I learned

- Good initialization helps, but sustained diversity is more important than it first appears.

- Allowing equal-fitness moves in VNS can improve exploration without damaging final quality.

- Search operators that look elegant on paper still have to justify their cost within the total compute budget.

Next steps

- Guide mutation rates with population similarity measures.

- Add Tabu Search to filter revisited moves and save evaluations.

- Explore discrete GWO variants and multi-objective extensions for energy or due-date constraints.
